Searle's Inside Man

The Lure of the False Cognitive Proxy (CRA, Part One)

If you are already familiar with Searle’s Argument and the main rebuttals, please skim this post and read the next one.

Introduction

Searle’s Chinese Room Argument (CRA), published in 1980, is one of the most infamous arguments in the philosophy of the mind.

I think the argument is without merit, and my heart sinks a little whenever someone invokes it, but it is of interest anyway, because it reveals an important conceptual trap – which I’ll call the Lure of the False Cognitive Proxy. A similar misuse of a proxy actually plays a key role in most anti-physicalist or anti-functionalist arguments, but the trap is easier to see in Searle’s set-up than in many related thought experiments, which means the CRA is a good starting point for assessing an entire family of anti-physicalist arguments, ranging from Leibniz’ Mill through to Nagel’s bats, Jackson’s Mary, and Chalmers’ zombies.

Here is a useful summary of the CRA provided by Searle:

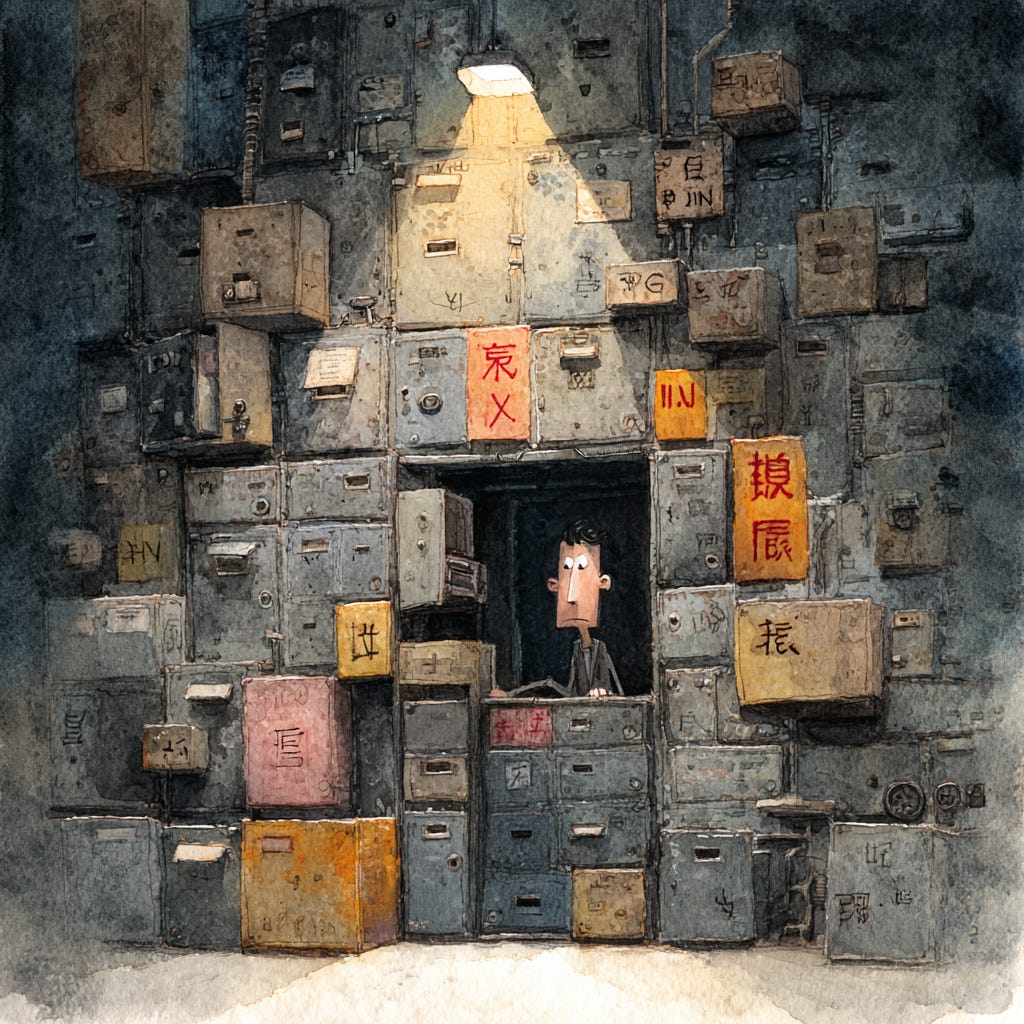

Imagine a native English speaker who knows no Chinese locked in a room full of boxes of Chinese symbols (a data base) together with a book of instructions for manipulating the symbols (the program). Imagine that people outside the room send in other Chinese symbols which, unknown to the person in the room, are questions in Chinese (the input). And imagine that by following the instructions in the program the man in the room is able to pass out Chinese symbols which are correct answers to the questions (the output). The program enables the person in the room to pass the Turing Test for understanding Chinese but he does not understand a word of Chinese.

[…] The point of the argument is this: if the man in the room does not understand Chinese on the basis of implementing the appropriate program for understanding Chinese then neither does any other digital computer solely on that basis because no computer, qua computer, has anything the man does not have.”

Adapted from the Stanford Encyclopedia of Philosophy (https://plato.stanford.edu/entries/chinese-room/)

Searle’s primary argument was that the program produces the outer appearance of understanding, but the human operator, supposedly afforded a revealing look inside, could not even say what the conversation was about, so genuine understanding must be missing. Searle reasoned that understanding must be something more than the mere cranking out of any algorithm, and he added a tagline: syntax does not give rise to semantics.

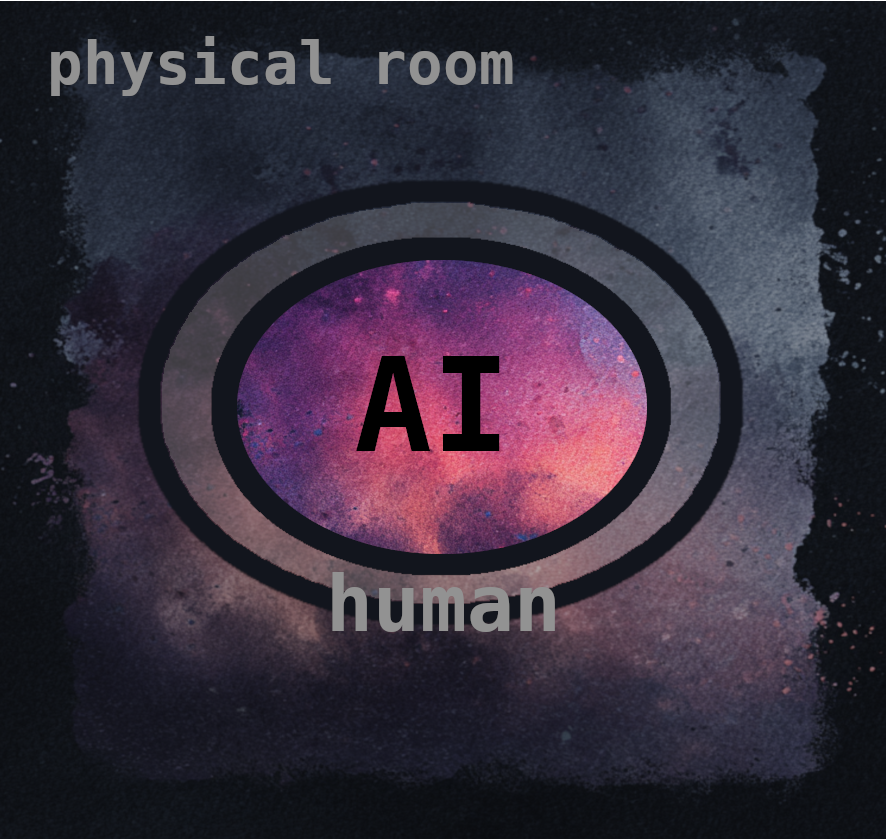

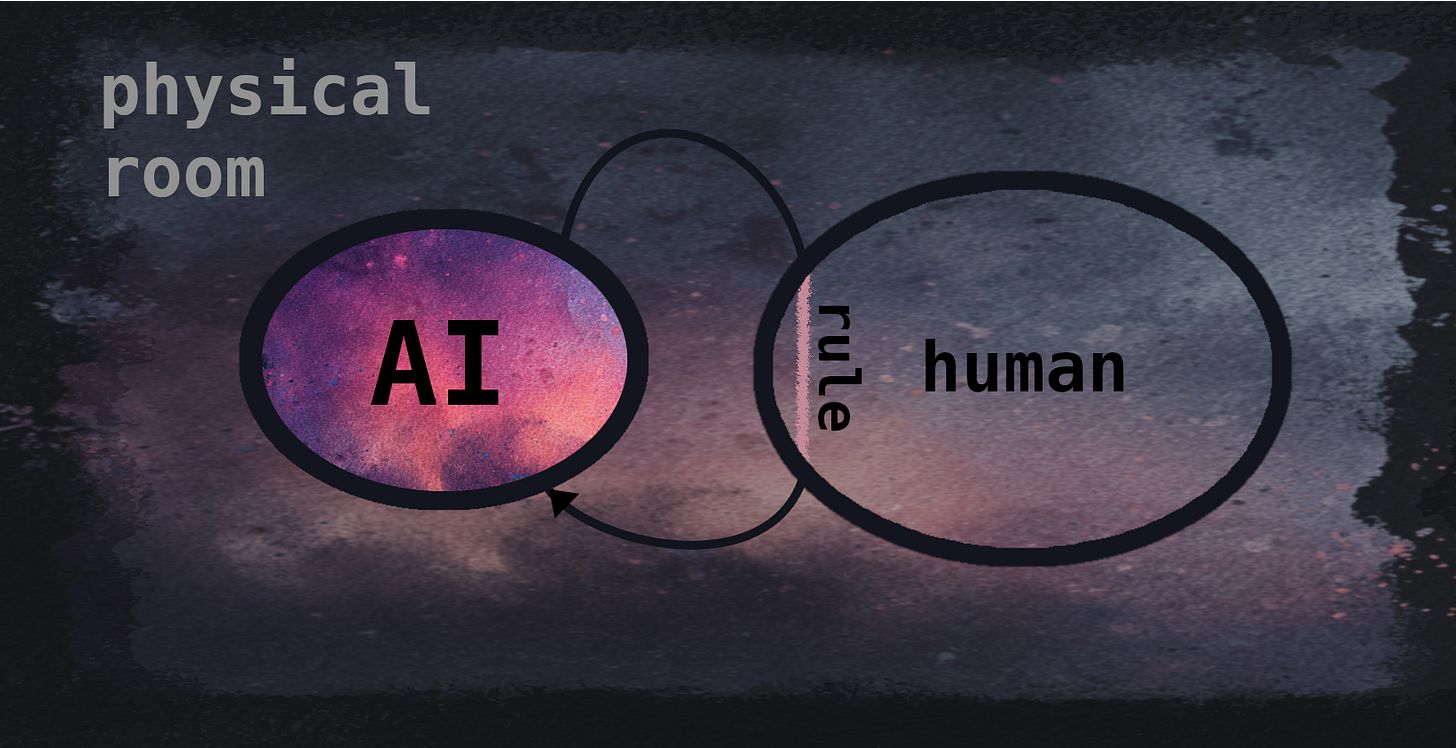

A key feature of the CRA, never adequately acknowledged by Searle, is that it relies on the use of one cognitive system (the human Operator) as a proxy for assessing a different cognitive system (the Chinese Room), despite abundant evidence that the proxy is inadequate.

The structure of the argument hides its reliance on a proxy so well that most people miss it, but the entire argument rests on a conflation between: 1) what the Operator knows and understands thinking independently of the written instructions, using their own brain; and 2) what is known and understood by a distinctly different cognitive system, the Room, which is physically embodied in paper but totally reliant on the algorithm being implemented by the human proxy.

The watershed issue is whether or not the Operator’s judgment of the Room’s understanding is formed within a cognitive system that is sufficiently sensitive to the Room’s algorithm, and therefore positioned to pass judgment. I think it’s not; Searle assumes it is. But I’ve not seen evidence that Searle has ever asked himself the relevant questions needed to resolve this key point.

Where does the recognition of incomprehension take place, both physically and functionally?

Does that recognition primarily depend on the cognitive architecture and activity of the Room, or the Operator?

Is there any conceivable sense in which the Room commits an introspective self-check for understanding, or does this cognitive exercise happen entirely within the human Operator?

Re-imagine Searle’s set-up with two input slots (Chinese and English), and two corresponding output slots. As in the original CRA, Chinese inputs get processed by the algorithm, but now we can also post English questions, which go straight through to the Operator to be processed by a human brain.

Suppose that, as in Searle’s original paper, we input a Chinese story about burgers, and the Room can discuss the story and its implications. Functional comprehension is intact. We then post the English question: “Do you know what we’ve been discussing?” We trace the causal connections, which stay within the confines of the human’s skull until the Operator picks up a pencil and writes: “Were we discussing something? You know, I had a suspicion this was some sort of language algorithm. Chinese, right?”

We post the equivalent Chinese question: “你知道我们一直在说什么吗?” (Do you know what we’ve been discussing?) Again, we trace the causal connections. This time, both cognitive systems get involved, though the Operator’s cognitive involvement will be non-linguistic in nature and relatively superficial, for reasons that I’ll consider below (and in the next post). The Chinese output will be something like: “当然知道.” Of course I know.

If we select the wrong slot for the language of the input, we get useless results. A Chinese question in the English slot might lead to the output: “That means nothing to me. Was that meant for the other slot?” An English question in the Chinese slot will be treated as invalid or produce a helpful redirection, depending on the sophistication of the AI.

Searle’s CRA paper can be interpreted as an invitation, in English, to speculate on what the Chinese-speaking algorithm understands. Suppose we post Searle’s paper through the English slot. If you traced the resulting causal connections, you would observe that all the cognitive processes actually engaged in diagnosing the lack of understanding stay within the human’s skull. The processes are the ones that the usual reader of the CRA is supposed to empathise with, while passing judgment on the Room’s algorithm. That algorithm serves as the target of the judgment, so it might be represented indirectly, in an impoverished form, by some neural ensembles within the Operator’s brain, but otherwise it doesn’t get involved. In that form, and in those neuroanatomical sites, it has no cognitive efficacy. The Room is totally reliant on the Operator, which creates the illusion of cognitive overlap, but the Operator is not at all reliant on the Room. The Operator follows the algorithm while processing Chinese questions, creating a transient homology, but that homology disappears when the Operator considers Searle’s paper and introspects to assess what they have understood.

Of course, Searle doesn’t actually use an English slot when seeking judgment on the algorithm – if he did, the flaw in the CRA would be obvious – but he doesn’t need to. His readers are expected to use the only cognitive system available to them, and to empathise with the fictional human Operator, who would answer by using their biological brain, not the Room’s algorithm. (Has any reader, in the history of the CRA, ever used a paper algorithm to decide what to think about Searle’s argument? Please let me know in the comments if that’s how you reached your own opinion.)

Now, if you ask the Operator what it thinks about the Room’s understanding, you might get an answer back from an Operator who has had recent cognitive contact with the Room, and that Operator might have pretensions of insight, like Searle, but the causal provenance of the final English output would always show that the Operator has actually responded to Searle’s invitation by consulting their own independent cognitive system, not by consulting the Room via its algorithm. The human insights into the understanding embodied in the algorithm have actually been formed within the human skull of the reader, empathising with the brain of the Operator, in an attempt to gauge the understanding embodied in the Room’s algorithm.

But nothing in the CRA dictates that we have to assume an idiotic Operator with pretensions of insight.

A smarter Operator would concede that they are in no position to pass judgment on a system that they are merely facilitating via impoverished, low-level access. A sensible answer might emerge from the English output slot as follows: “Sorry, I’m only getting a useless, keyhole view of the Room’s cognition, so I don’t know what it understands. The inputs look like Chinese characters to me, but I have no understanding of what any of them mean; it’s like trying to review a movie after watching it one pixel at a time. Perhaps you should try asking the Room what it understands? But don’t forget to translate the paper into Chinese, first. Good luck. Hopefully we can speak again in a few years, when you get the Room’s response. I’d be interested to see what it says.”

Two systems, with different abilities and two different perspectives. Not really worthy of decades of debate.

The Mill Family of Arguments

All of this started with Gottfried Leibniz. He was one of the first people to envisage an AI when, in 1714, he asked his readers to imagine a machine that mimicked the functions of the human brain. Not surprisingly for his time, he took it as obvious that such a machine could never be conscious. He proposed that the machine could be blown up to industrial proportions, such that his readers might walk around inside it, as though visiting a windmill. Such a visitor would see bits and pieces of clockwork pushing one another, argued Leibniz, but no unifying experience. On the other hand, we are clearly conscious, so we must be more than a set of physical mechanisms.

Leibniz’ Mill was the forerunner of the CRA and philosophical zombies, and he became the first hardist by committing its original sin: judging a cognitive system from a perspective incapable of providing adequate epistemic insights, and then proposing ontological extras to bridge the gap.

I will take up the Mill argument in a later post, but it, too, involves a false cognitive proxy. The human Visitor, who only sees a small portion of the Mill at any one time, never assimilates with the cognitive structure of the Mill, and is therefore in no position to adjudicate on its consciousness.

Searle’s argument was based on the same intuitions, but Searle directed his anti-functionalist sentiment at the emerging field of AI, which at that time (the start of the 1980s) was producing some early clues that the AI researchers might be approaching understanding in machines. To some extent, clever exploitation of syntactical structures in natural language was being used to fake understanding so, for many people, Searle’s syntax/semantics tagline seemed apt. Much of the understanding being attributed to early AI systems was indeed being projected onto those systems erroneously – the words being manipulated by the machines were subsequently interpreted by humans, who attributed the usual meanings to the manipulated words, without those meanings playing a key role in producing the output. (Ironically, meanings assigned to the words within the brains of humans were being projected onto the machines, in an inversion of Searle’s error; the CRA projects the human’s lack of understanding onto the machine.)

Searle had a legitimate point, of sorts, though his justifiable scepticism about clunky, symbol-based AIs got swallowed up by the CRA’s fallacious reasoning, and the issues got more confused because Searle extended his false-proxy logic to justify a broader denial of computational approaches to consciousness in humans, as Leibniz had done.

With the recent arrival of language‑capable AIs, some people have returned to the CRA for insights into whether large language models (LLMs) understand what they say. My own view is that LLMs embody much more understanding than the old-fashioned AIs of the 20th century, but LLMs still do not embody understanding at a very deep level. They have not acquired the sort of knowledge that comes from navigating the physical world, for instance, and they almost certainly lack the phenomenology (and the underlying functional mechanisms) of conscious introspection, so they can’t watch themselves consciously grasping the meanings behind their own words. LLMs also don’t automatically back those meanings with imaginary reconstructions in a virtual, theatre-like mind, though we could envisage architectures in which they did.

Searle makes somewhat similar claims about the lack of deep meaning in LLMs, so it might seem that I’m agreeing with him, but I see no reason why future AIs could not reverse all of these deficits. More importantly, I think the CRA has no utility in clarifying these issues – except, perhaps, as a cautionary tale, by demonstrating a confused way of approaching meaning and consciousness in AIs (and, by extension, subjectivity in general).

There are, of course, many counter-arguments to the CRA. Most of these are explored in the CRA entry in the Stanford Encyclopedia of Philosophy (SEP). It’s 21k words long, but well worth a read; the author even-handedly lays out many rebuttals of the CRA, along with Searle’s responses.

One line of rebuttal outlined in the SEP entry, the “Virtual Mind Reply”, approaches the main point I want to make in this post, because some other authors have also proposed that the Room constitutes a distinct cognitive system different to that of the Operator, but I think this rebuttal can be expressed much more strongly than it usually is, and the lessons learned can be applied much more widely.

Consider the following pairs:

· Leibniz’ Mill and the Visitor

· Nagel’s bats and some frustrated bat-scientists

· The colour-savvy cognitive system described in Mary’s textbooks, and Mary herself

· Searle’s Chinese-speaking Room, and the English-speaking human operator

· Chalmers’ zombies and a generic reader conceiving of a zombie

In all of these classic thought experiments, an observing cognitive system (the Judger) fails to recreate or observe something within itself while contemplating a different, observed cognitive system (the Judged). In the Zombie Argument, this failure is voluntary, but, in the others, the Judger generally tries to switch perspectives and fails. Each of these famous thought experiments involves adopting the third-person perspective of the Judger, and then using that perspective to highlight the strangeness or ineffability or non-mechanical or non-syntactical nature of some subjective feature in the Judged. The reader is encouraged to join the Judger in doubting the capacity of the observed functional processes to “give rise” to whatever is deemed to be missing in the Judged. Instead of considering functional reasons for the divergence between the two cognitive systems (and the realistic epistemic limits faced by the Judger), the proponents of these arguments usually treat the imaginative exercise as constituting a direct, infallible insight into a single system, the one being observed. The proponents then propose that something non-functional must account for the shortfall.

For his subjective feature of interest, Searle initially chose what might be called genuine semantic understanding, which he wanted to distinguish from the behavioural mimicry of understanding, but this has direct, transparent parallels with Chalmers’ distinction between genuine phenomenal experience and the bland phenomenal judgments of zombies (and similar controversial distinctions in the qualia debates).

One reason to dissect the CRA, then, is that its relevance extends well beyond the nebulous notion of “understanding” in AIs. It provides a template for a series of unreliable conceptual moves that can be applied to other elements of subjectivity; the parameters of Searle’s thought experiment can be tweaked to draw related conclusions about AI consciousness, human brains, biological consciousness, and qualia. Searle himself has presented versions of his Room that amount to paper zombies, and Chalmers has invoked Room-like set-ups in his presentation of the Zombie Argument. Searle has also applied similar thought experiments to dispute the potential for “qualia” in non-biological systems (whatever qualia are supposed to be). In other words, all of these arguments are conceptually related. I will consider them collectively in a later post, with a more detailed look at their shared flaws, including their tendency to slide into epiphenomenalism.

The CRA stands out from some of these other arguments because Searle made the objective-subjective distinction more salient by bringing in a second language. If we consider the CRA sympathetically, we are not left with the vague sense that some higher-order subjective feature might be absent in the Room; we don’t end up wondering whether we have correctly conceived of a behaviourally normal exterior hiding a meaningless interior, as we must when we conceive of zombies. Putting ourselves in the Operator’s position, we simply predict that we wouldn’t understand a word of the Chinese conversation. We’ll have processed the shapes of squiggles, looked up a lot of complex rules, and performed a lot of calculations, but we might not even recognise that we have been engaged in a conversation, much less gleaned the content.

Many of the other Mill-derived arguments lack this clarity. We can be genuinely unsure whether we have accurately imagined a zombie or reached a defensible opinion on the sentience of Leibniz’ Mill, and it is always a little unclear what a zombie or Mill is supposed to lack. It can be unclear how we should think about the referent of a redness concept, and whether that referent changes as we switch our conceptual mode of reference from the form adopted by Mary pre-release to the more typical post-release cognitive style.

But we all have a clear sense of what it is like to find a foreign language semantically opaque.

The high cognitive salience of the human Operator’s ignorance has been a key part of the success of the CRA – but that salience also helps to expose the flaw in this style of reasoning. If “genuine understanding” is such a nebulous entity that it is not characterisable in functional terms, and if “genuine understanding” is so epiphenomenal it could go missing without behavioural consequence in a Zombie Room modelled off a human brain, then its absence in Searle’s Operator is suspiciously obvious. Indeed, the Operator’s lack of understanding is readily explicable in functional terms, which forces us to wonder how an explicable functional divergence could end up being sold as an insight into the deficits of functionalism, and why anyone might ever think it useful or coherent to posit an epiphenomenal property to explain a gap that makes itself so easily felt.

If there are two cognitive systems that don’t even speak the same language, why would we ever think that one can be used as a proxy for the other?

The answer seems to be that most people, even critics of the CRA, treat Searle’s set-up as a unified system with all of its secrets laid bare. The fact that a second cognitive system is involved is overlooked, as is the idea that the second cognitive system might face significant limitations. Empathising with the Operator, we can see exactly what’s going on; the entire algorithm lies before us, and it cannot make a single move that does not happen directly under our gaze.

Or so Searle wants us to believe.

The CRA’s Rhetorical and Psychological Tricks

For those who don’t find the CRA persuasive, it can seem quite odd that it has generated so much discussion and apparent respect.

Consider, for instance, the excited endorsement of Julian Baggini (2009), who said that Searle “came up with perhaps the most famous counter-example [to functionalism] in history – the Chinese room argument – and in one intellectual punch inflicted so much damage on the then dominant theory of functionalism that many would argue it has never recovered.” (From the SEP entry.)

I think this endorsement is ridiculous, but it should be conceded that the CRA is a brilliant piece of memetic engineering, and it has proven well-adapted to propagate among the unwary. It’s therefore worth considering some of the ways it massages the necessary intuitions.

Like Leibniz, Searle cleverly positioned the human proxy physically inside the AI, which creates a strong sense that the Judger has been afforded full, revealing access to the Judged cognitive system. But instead of tapping into the objective perspective through the eyes of an uninvolved Visitor wandering through the Mill and perhaps seeing only part of it, Searle invited us to imagine that we are the human Operator of the Room, seeing everything. This boosts the intuitive effect: the Judger’s cognition is causally involved in every step of the Judged cognitive process, and the human brain briefly holds each functional element of the other system within itself, serially, as the computation proceeds. Practically all of that exposure would be promptly forgotten, leaving no lasting cognitive trace in the brain of the human operator, but the Judger has been briefly in contact with each part of the Judged, and that seems to be enough to carry the day rhetorically.

Note how intimately enveloped the two systems seem, from a naïve perspective: every functional aspect of the AI passes cognitively within the human, who is in turn physically within the room with its paper embodiment of the AI algorithm. In combination, these features encourage the reader to accept Searle’s assumption that the experiences of the human Operator are a valid proxy for assessing the Room. This intimacy is largely illusory. There is a gap between the reader and the hypothetical operator, which can be arguably bridged by empathetic imagination, and another much more significant functional divergence – manifesting as a comprehension gap – between the Operator and Room.

By making the Room’s algorithm entirely dependent on the Operator, Searle invites us to consider whether the Room has anything not possessed by the human brain executing it (“no computer, qua computer, has anything the man does not have”). But if the comprehension of the human is being used as a proxy for the AI, then this is approaching the issues from the wrong direction. He should be asking: Does the man have a perspective the computer does not have? Does the human have any cognitive substrate that is not engaged in processing the Room’s algorithm, and what role does that private, non-Room, human cognition play in the CRA? Does the linguistic information captured within the algorithm have any realistic chance of being transferred into the cognitive system that will ultimately decide what has been understood and, if not, why not? Searle’s argument relies on the lack of such a transfer, but it also relies on the reader treating the Operator as having transparent, complete access to the Room, on the grounds that the Room has complete dependence on the Operator. The effective functional separation of the two systems is obscured by an arrangement involving one-way functional dependence.

It should be conceded, here, that the obligatory involvement of the Operator with every step of the Room’s algorithm does have important cognitive side effects in the brain of the Operator. Not only does this arrangement force the Operator to observe every single step, it creates heightened appreciation of the low-level functional connections that ultimately make the algorithm work. Consider the different levels of involvement we might specify for a human judging an AI: 1) casually observing parts of the algorithm, like Leibniz’ Visitor, without necessarily seeing all of it; 2) passively watching every line of an algorithm scroll past as it is being executed; 3) repeatedly clicking a button that lets a single line of the algorithm get executed, as when a programmer steps through a program looking for a bug; 4) personally providing the causal medium that executes each line, as in the CRA. Human attention will be best captured by the last of these, but the greater cognitive demands of personally executing each line will also, potentially, slow execution to the point that some patterns will no longer be apparent. Low-level engagement will also tie up cognitive resources that might have been better utilised thinking about higher-level features of the algorithm. How well would you understand a novel’s plot and characters after laboriously dissecting each sentence into its grammatical components, identifying each part of speech, and writing the result down, compared to someone who just read the novel at normal speed?

It should also be conceded that stepping through an algorithm can often reveal insights, and one of those insights could be that the program was moving bits of text around in a clunky mechanical way that did not distinguish “The cat sat on the mat” from “The bork sat on the zork.” When Searle published the CRA, some people might have pictured the Operator gaining this sort of insight… But the CRA rests on a failure of comprehension, and failing to understand an algorithm is not a particularly worthwhile insight. Complex algorithms can obviously be cognitively impenetrable, despite close involvement with each step, especially if the architecture is of the modern, connectionist sort. That impenetrability is not evidence of a lack of understanding in the algorithm and, indeed, we can expect penetrability to go down as the algorithm’s cognitive sophistication increases, in a complete reversal of Searle’s assumptions.

In giving the observer a causal role, elevating them to Operator instead of Visitor, Searle was probably inspired by a similar language-based thought experiment, “The Game” (Mickevich, 1961), in which 1400 math students in a stadium functioned as a digital computer, performing calculations on binary numbers and passing each other results. They learned the next day that they had been collectively translating a sentence from Portuguese into their native Russian. The protagonist concludes that even the most perfect simulation of machine thinking does not capture the thinking process itself.

Searle’s set-up offers a strong rhetorical advantage over a stadium of students: all of the functional steps have notionally occurred within the grasp of a single observer, not diluted among hundreds. No single student in “The Game” saw the full operation of the gymnasium AI, none of them had the full picture, and none appears suitable to be considered a valid proxy. Also, translation of a single sentence could be achieved without a rich model of semantic relations, so the students will have seen, at most, a tiny fraction of the AI that would be required for full, ongoing, contextually appropriate translation between two languages.

Searle’s Operator, we might assume, has no such excuse, observing everything – but instead the Operator faces other serious epistemic limitations, which have proven more difficult to recognise.

The downside of using a single human operator is the excessive slowness, leading to a massive dilution of cognition across time, and this has drawn some comment from critics of the CRA. By itself, the speed of computation is unlikely to be a decisive factor. (If the phenomenology of understanding is dependent on speed, but there are no internal clocks linked to real physics and no external temporal cues, how could the speed of an algorithm ever be detected on introspection?) Even if the Operator’s calculations were massively sped up, they would still be occurring in a cognitive system different to the one being assessed, with inadequate connections from the algorithmic calculations to the rest of the Operator’s cognition. More importantly, the speeds of the Judger and the Judged are hopelessly mismatched; at any one time, the results of almost all prior calculations would be forgotten by the human brain, which is expected to pass judgment on a cognitive process that is moving with glacial relative slowness. If the whole process were sped up proportionally, so that the Room communicated at normal speed in real-time, like a chatbot, the human forgetting would also be sped up, and the Room would still remain a slow-moving mystery to the Operator.

Searle also made some rhetorically effective (but ultimately misleading) comments about the difference between syntax and semantics. The syntax/semantics distinction is not what primarily fuels the reader’s sense that the human Operator lacks understanding, which instead relates to the functional divergence between two different cognitive systems, but this pithy rhetoric synergistically combines with the main intuitive thrust of the argument. The unwary might be tempted to think that Searle has not only demonstrated that AIs have no capacity to achieve meaning, but also explained why.“Oh, computers only have syntax, not semantic understanding. That’s why the Room doesn’t understand anything.”

Imagine this exchange, a century ago. “Why can’t computers win at chess?” “Because computers just follow rules, and there’s more to chess than just following the rules.”

The suggested answer is wrong, because “rules” here is doing double work: the rules being followed in the implementation of the chess program are not just the rules of chess, and following decision rules of sufficient complexity turns out to be a very good recipe for chess-playing ability, vastly exceeding human skill.

In much the same way, the sort of syntax that is neatly cleaved from semantics when we consider whole sentences has nothing much to do with the computational structures underlying the production of meaningful (or apparently meaningful) sentences.

Searle’s syntax/semantics rhetoric is effective because it is built on a half-truth. Searle is sneaking in a controversial resolution to the issue of whether semantics can ever be captured algorithmically by appealing to an uncontroversial distinction between syntax and semantics, familiar to us all at the sentence level. He gets away with this because the rest of the argument appears to support the same conclusion, at least for readers who accept the validity of the human proxy, and the AIs of the time were, to some extent, exploiting syntactical tricks to fake semantics.

Searle’s argument arrived at a time when the conceptual distance between sentence-syntax and the underlying “syntactical” processes of AI algorithmic cognition was not as vast as it is today. Searle’s tagline applies to symbolic AIs of the 1970s, in which the variables being manipulated had clear real-world interpretations: one symbol might mean “burger” and another might mean “money”, and so on. Some very primitive early experiments in AI even involved some canned sentences that were indeed indifferent to the meaning of what each word meant, and Searle tapped into the reader’s intuitive recognition of the brittleness of those early systems, and their reliance on meanings that were attributed to the AIs from the outside. The plausibility of the CRA was therefore boosted by the suspicion that a hand-coded world model with clunky symbols could never capture the rich semantic relations of the real world. The exploits of some early AIs were probably given higher credence than they deserved because natural language manipulation, achieved with shallow syntactical approaches, can triggers semantic associations in the humans who read the AI output. If we take away the additional meanings attributed by enthusiastic AI researchers, the remaining claim on meaning, intrinsic to the AI, might be rudimentary or non-existent. In that context, we might be inclined to forgive Searle’s choice to look at the perspective of a man in a room who has been rendered incapable of inferring additional meanings because he has been linguistically handicapped.

The problem is that his perspective does not have the universal applicability that Searle’s logic assumed.

Since the surprising successes of LLMs, Searle’s CRA has been cited to support the idea that LLMs also lack genuine semantics. Several authors (including some Substack authors, such as Maggie Vale) have countered with arguments that LLMs are no longer relying on syntactical trickery, and their underlying statistical models imply a rich world model. It is important to note, though, that anyone arguing about the evidence for understanding in algorithmic AIs is mistaken if they think this evidence refutes (or supports) the central logic of the CRA: the CRA is simply not sensitive to any of the internal implementation details of the cardboard AI.

Transferring Searle’s argument to the era of contemporary AIs would require some minor adjustments, but none of these would change the overall thrust of the argument. As Searle said, “if the man in the room does not understand Chinese on the basis of implementing the appropriate program for understanding Chinese then neither does any other digital computer …” Within an LLM, many thousands or millions of variables in subtle combinations might be needed to represent a single real-world entity, and most of those variables would also be re-used in the representation of other entities, with a diffuse many-to-many relationship between the representational substrate and the implicit modelled world, instead of the rigid one-to-one relationship that Searle probably had in mind.

Searle’s central argument would remain the same if we put aside his symbol-based AI and put the Operator in charge of a paper version of GPT5. The human operator would still be focussing on a low-level algorithm, oblivious to what is represented by the Chinese symbols. If the understanding of the Operator is accepted as a proxy for the AI’s understanding, then the LLM does not understand anything, and no behavioural evidence or architectural considerations are relevant.

The syntax/semantics issue is complicated, though, and these brief comments do not cover the full story. In the interests of space I will leave further discussion for another post. (The SEP entry covers the issues quite well.)

Ultimately, though, Searle’s tagline does not connect with the real reason the CRA relentlessly reaches its conclusion. It merely provides a conceptual stopping point where fans of the CRA feel they have arrived at a profundity, backed by the insights provided by the false proxy.

Indeed, if algorithmic AIs of the future eclipsed our own intelligence, then the CRA would still suggest that their superhuman intelligence was associated with no actual understanding. If we didn’t understand something – some new branch of science, say, or some conceptual problem too difficult for mere human minds – we might take our problem to such an AI, and we might expect it to explain the concepts to us, but the superior explanatory ability of the advanced AI would apparently not constitute “genuine understanding”. According to Searle’s logic, it would only be after the AI had taught the concepts to us, and its explanations had entered our relatively stupid biological brains, that the concepts would be genuinely understood for the first time (and we would constitute the sole repository of any “genuine understanding”, even if we continued to rely on the AI for further mentoring and refresher courses for some time afterwards).

This interpretation seems very odd, and it suggests that the CRA is massively overpowered, leading to unreliable, implausible conclusions.

When Searle proposed the CRA, he probably believed such hyperintelligent AIs would never arrive; I expect him to be proven wrong in a few years (unless humanity puts a moratorium on AI development). By then, of course, Searle’s Room would need to be the size of a very large planet, if not a star, and the human operator would need life‑prolonging technology to complete a simple conversation, so the thought experiment would be even more strained than it was in 1980.

It would be a shame if the CRA contributed to complacency about AI risk by encouraging the assumption that AIs are intrinsically incapable of understanding anything.

Common Rebuttals

The CRA has generated considerable debate over the years, and I recommend the SEP entry for a more detailed review of the literature. This post won’t be able to deal with all of the thoughtful rebuttals that have been published, but there are a few dominant themes.

Some authors have conceded that the Room’s understanding is limited, but only because the AI being implemented would have to be relatively primitive to be amenable to human operation; more sophisticated algorithms could achieve understanding. Fans of the CRA will simply insist that, in principle, more complex algorithms will use up more of the Operator’s time, but not give them any more insight.

Some have argued that, if the Room were placed inside a robot engaging with the physical world, controlling a body with needs rather than processing textual questions, this would imbue its models with genuine meaning, linking symbols to their referents in a rich causal relationship.

There is some merit to this idea, in terms of what is needed to produce an AI with a rich world model, but I think it ultimately misses the mark. Even if the training of future AIs included an embodied robotic life, it would still be the case that the final model – now more sophisticated – could be instantiated in a Searlean Room, where it could be processed by a second cognitive system that failed to relate to the AI’s improved grasp on the real world.

Some authors have resisted Searle’s conclusion by arguing that the Operator might come to understand Chinese. I find this deeply implausible: the very factors that lead me to say that the human Judger is a poor proxy for the Judged AI lead me to doubt that the human would learn Chinese by the methods described. If we assume the Operator has unrealistically good memory and an immense, superhuman capacity for logical interpolation, then perhaps this result cannot be ruled out entirely. This sort of response is unnecessary, though, because we can reject Searle’s conclusion even if we accept his assumption that the Operator completely lacks understanding of Chinese. The Operator’s understanding simply isn’t relevant.

Suppose that the human Operator is stipulated to be brain-damaged. They remain a reliable follower of written rules, so the Room behaves normally, but the Operator has no short term memory beyond the last rule. What value would we place on the judgments of this clearly defective Operator? Surely, in this revised version, the human opinions are now obviously useless? A defender of the CRA might concede that, yes, this brain-damaged Operator is particularly poorly placed to judge the Room’s cognition, so, to reach Searle’s conclusion, we would have to stipulate that the Operator has very high intelligence – with this IQ-guarantee in place, all the usual Searlean conclusions follow. But the brain-damaged Operator is only facing a more extreme version of the epistemic limits already faced by any normal human, who cannot possibly memorise billions of parameters and see all of their functional interrelationships.

The Room’s understanding does not change when we notionally dial the Operator’s cognition up or down, or we view the Operator’s prospects of personally decoding the inputs with greater or lesser optimism. Our verdict of what the Room understands should not be dependent on the Operator’s understanding at all; as will be discussed in the next post, they are different cognitive systems.

Most rebuttals of the CRA come from people willing to accept Searle’s baseline assumptions that: 1) the Room converses normally in Chinese; 2) the Operator lacks understanding of Chinese; and 3) this situation would persist despite future improvements in the Room’s algorithm.

One popular objection in this group points out that the scale of the imagined mechanisms is completely wrong, leading us to underestimate the system’s capacity for understanding.

When we imagine a room with a single human operator, we are not really imagining the whole AI; we are imagining a tiny part, perhaps only a few calculations being scribbled on a single card with everything else no more than a stage prop, and we are inferring – unreliably – that understanding is lacking in the much larger system that we haven’t bothered imagining in any detail. This quantitative shortfall alone accounts for the strong intuition that the algorithmic manipulation of variables is insufficient for capturing meaning. The structures being imagined could not capture meaning.

There is what we could call a zooming bias involved in most anti-functionalist arguments: the reader of the argument zooms in on a few generic examples of the computational substrate, often simplifying the judged cognitive system by many orders of magnitude; the reader subsequently imagines an inadequate functional system and concludes that the simplified version could not sustain the subjective feature of interest.

The most popular rebuttal to the CRA is probably the System Reply, which argues that it is the total system that understands the Chinese conversation; the understanding is embodied in the room, the cardboard, the rules, the calculations, and all their associated inter‑relationships. The lack of understanding within one component – the monolingual human operator – does not tell us anything reliable about the complete cognitive system.

I think this rebuttal is a major improvement on Searle’s original interpretation, and it approaches the main argument made in this pair of posts, but it somewhat misses the critical point that the human component of the “Total System” is not merely cranking out the Room’s algorithm, but also providing the perspective from which that algorithm is being judged, and that perspective is inadequate. It is not the Total System that diagnoses the lack of understanding within itself; this judgment is notionally reached within the relatively isolated cognitive island of the Operator.

To escape Searle’s confusion, we need to distinguish between: 1) concerns about which cognitive structure is being assessed and found wanting, and 2) concerns about what cognitive structure is doing the assessment. The System Reply, despite its good intentions, primarily draws attention to the algorithm that is being judged. Fans of the CRA can simply agree that they are supposed to judge the Total System. For them, nothing changes. The physical props don’t understand Chinese, and neither does the human. These fans are not ignoring the physical room and the cardboard and the instructions that constitute the System; they imagine themselves sitting in the room, seeing it all clearly. What they are not recognising is that the Total System houses two independent cognitive structures, and the lack of understanding in the Operator component plays a key role in the argument by performing the judgment from a compromised, limited perspective.

The CRA achieves its rhetorical effects by offering what looks like a revealing inside view of the Total System. If we automated the Operator, but kept the Total System’s architecture otherwise unchanged, we would not modify the System’s total understanding of Chinese, but we would lose all illusions of having a “man on the inside”, witnessing the alleged semantic emptiness of the algorithm as it unfolds. If we appropriately consider the human operator as a causal component within a larger system, but we also inappropriately continue to trust the Operator’s limited perspective when judging that larger system, without considering the functional role of the Operator’s own cognition, we will miss the massive functional split within the system, which is what drives the CRA to its conclusion.

Ultimately, it is the acceptance of the Operator’s understanding as a valid proxy for the Room ‘s understanding that does the intuitive work of the CRA. Reasserting that the Total System really does have understanding will do little to convince those who have unwittingly accepted the proxy.

In a counter-response to the System Reply, Searle has insisted that the human could be imagined as memorising this vast structure, while still not understanding anything. This counter-response is misguided, because the Room’s algorithm and data, once memorised, would still not have been cognitively assimilated to make a unified system; it would constitute a second, Chinese-speaking cognitive system improbably held within the same skull as the monolingual English speaker, much as when I run an virtual Android emulator on a Window’s PC. But this response also shows a complete disregard for issues of scale. If the algorithm is sophisticated enough to perform convincing natural language processing, the Operator’s cognitive capacity would fall short by many orders of magnitude. Stuffing an entire new cognitive system within another is simply not possible, to the extent that it makes very little sense to consult our intuitions on the question of what would follow from this impossibility. We can have no basis for any sensible opinion when we have completely disregarded all cognitive limitations.

In the Other Minds Reply, some authors have pointed out that behavioural evidence of the Room’s understanding should be good enough, because such evidence constitutes the sole reason we ever attribute meaning to human minds beyond our own. I don’t accept this argument, because I don’t think it is automatically obvious that minds are substrate-independent. I think that the reason we tend to be more generous when recognising other biological minds is that we extrapolate from our own situation, not because we all take external behaviour as the decisive indicator. At any rate, this response does not account for the way Searle has apparently shown that external behavioural assessments and the insider view can be discordant. Only when we realise that the human proxy is useless can we account for that discordance, and then fall back on the fact that behavioural assessments necessarily constitute a large part of what we must consider in attributions of understanding. (I would also add that cognitive architecture is important, but that is an argument for another day.)

The Epiphenomenalism Reply is one of the strongest objections to the CRA, especially when applied to Searle’s wider attack on functionalist accounts of the human brain: Searle’s concept of “genuine understanding” leaves him chasing an epiphenomenon that exerts no causal effects.

One issue with an epiphenomenalist conception of “genuine understanding” is that the resulting notion of pure semantics would be invisible to natural selection, and therefore have no reason to evolve. As noted in the SEP entry:

At the same time, in the Chinese Room scenario, Searle maintains that a system can exhibit behavior just as complex as human behavior, simulating any degree of intelligence and language comprehension that one can imagine, and simulating any ability to deal with the world, yet not understand a thing. He also says that such behaviorally complex systems might be implemented with very ordinary materials, for example with tubes of water and valves.

This creates a biological problem, beyond the Other Minds problem noted by early critics of the [CRA]. While we may presuppose that others have minds, evolution makes no such presuppositions. The selection forces that drive biological evolution select on the basis of behavior. Evolution can select for the ability to use information about the environment creatively and intelligently, as long as this is manifest in the behavior of the organism. If there is no overt difference in behavior in any set of circumstances between a system that understands and one that does not, evolution cannot select for genuine understanding. And so it seems that on Searle’s account, minds that genuinely understand meaning have no advantage over creatures that merely process information, using purely computational processes. Thus a position that implies that simulations of understanding can be just as biologically adaptive as the real thing, leaves us with a puzzle about how and why systems with “genuine” understanding could evolve. Original intentionality and genuine understanding become epiphenomenal.

A more profound issue, not covered in the SEP entry but directly related to a major theme of this blog, is that Searle himself could not defensibly diagnose an epiphenomenal extra within himself. Genuine understanding would be cognitively invisible, because it would not change any neural firing patterns. The epiphenomenal nature of Searle’s “genuine understanding”, therefore leads to a variant of what I have called The Zombie’s Delusion, or others might call the Paradox of Epiphenomenalism: all beliefs in epiphenomenal properties are necessarily inspired by non-epiphenomenal properties, and all such beliefs would be held with the same conviction if they were wrong. That means the inferences and conceptual chain leading to those beliefs are unreliable.

These concerns about Searle’s closeted epiphenomenalism are important, but they immediately raise a new question. If “genuine understanding” is not the source of Searle’s belief in it, then what is the source? Note that this question has direct parallels across the entire family of Mill-derived arguments. What is the actual functional source of belief in the special extras proposed by Leibniz, Jackson and Chalmers, given that these authors have all proposed entities or properties that exert no cognitive effects?

The answer, I propose, lies in the cognitive divergence between Judged and Judger, which the CRA fails to acknowledge. If a divergence between two different cognitive systems is misinterpreted as arising between two views of the same system, and it is also regarded in ontological terms, then the extra will necessarily end up being cast as epiphenomenal. It will seem to be real and will, in one sense, be real (because of the real divergence between Judged and Judger) but it will also seem to be non-causal (because the divergence is projected onto the Judged system, and the two views show the Judged system behaving identically).

I will take up this important point in a later post: it is the very essence of hardism, and one of the major sources of the fallacy of pseudo-epiphenomenalism (a concept discussed previously on this blog but overdue for another post).

Finally, some authors have responded to the CRA by proposing that Searle’s set-up creates a virtual cognitive entity that is distinct from the mind of the operator. The missing understanding could reside in that virtual cognitive entity, even if the human operator cannot see it. The SEP entry refers to this as the Virtual Mind Reply. I think this label somewhat begs the question by supposing that an algorithm instantiated in cardboard can be accepted as a “mind”, which complicates the issues. Understanding, for instance, might be applicable to unconscious entities.

Nonetheless, despite reservations about the terminology. I think that this response, not the System Reply, is much closer to diagnosing the real flaw in the CRA. Only this response exposes the real reason that the CRA set-up is so overpowered that it would also diagnose a lack of understanding in a hyperintelligent AI, or even a neuron-by-neuron model of Searle himself. Only this response makes sense of the entire family of fallacious anti-physicalist arguments that similarly rest on the use of a false proxy.

What is missing from the “Virtual Mind Reply” (but surely understood by many of its proponents) is explicit consideration of why Searle and many of his readers were so quick to reject the understanding of the Room. It is not that they overlooked the fact that the Room constitutes its own cognitive entity; the status of the Room as a cognitive entity is exactly what is being assessed in the CRA and found wanting. The problem is that judgment is passed within the wrong cognitive system, and the evidence available to the Judger is vastly inadequate. When readers pass a dismissive judgment on the Room, they are actually empathising with a second cognitive system, the Operator, who has mundane, functional reasons for not understanding Chinese.

The Room might be 100% functionally reliant on the Operator, who is involved in every functional step. But the Operator is, in turn, 99.99% independent of the Room. The Operator interacts with the Room piecemeal, one tiny functional operation at a time, and judgment is passed outside that tiny point of intersection, within parts of the Operator’s cognition that are objectively isolated from the parts of the system that constitute understanding.

To be continued…

The next post considers this situation in more detail, laying out the basic argument for the False Proxy Reply to the CRA.

The post after that, due next week, will consider the entire family of arguments and philosophical positions that rely on the acceptance of an inappropriate cognitive proxy.

It turns out to be most of the favourite anti-physicalist arguments.

I love this article (only read half so far). The title is hilarious. The two slot idea is a great way to push back against the intuition pump. Another way is to imagine an operator that does speak Chinese: the room would be identical if the operator follows the rulebook

You sort of hinted at the overall issue with the Mill (and with all these thought experiments): we're judging consciousness based on an incomplete view of the system. Real consciousness is a whole system and so judging based on a small view of some gears. We can still judge whether a system is conscious from third person observation but we have to be careful to take a holistic view of the system